PA 4: Multitrack Music Generation

PAT 464/564: Generative AI for Music and Audio Creation (Winter 2026)

Due at 11:59pm ET on Apr 13

Instructions

- Please remember to submit your code. You will receive zero credit if the code is missing.

- All assignments must be completed on your own. You are welcome to exchange ideas with your peers, but this should be in the form of concepts and discussion, not in the form of writing and code.

- Provide proper citations/references for any external resources you use in your writing and code.

- Submit your work to Gradescope.

- Late submissions will be accepted for up to a week with 1 point deducted per day.

Multitrack Music Generation

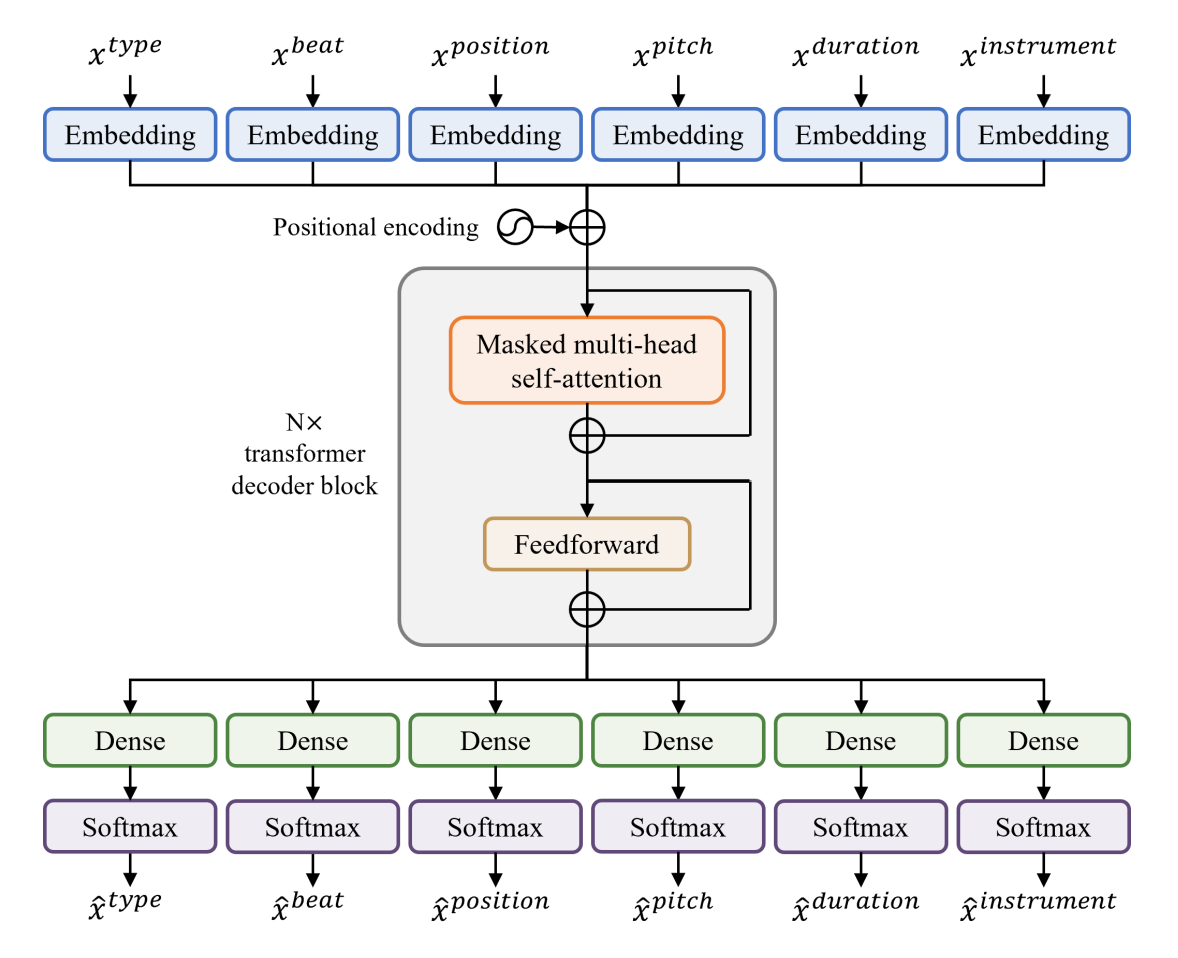

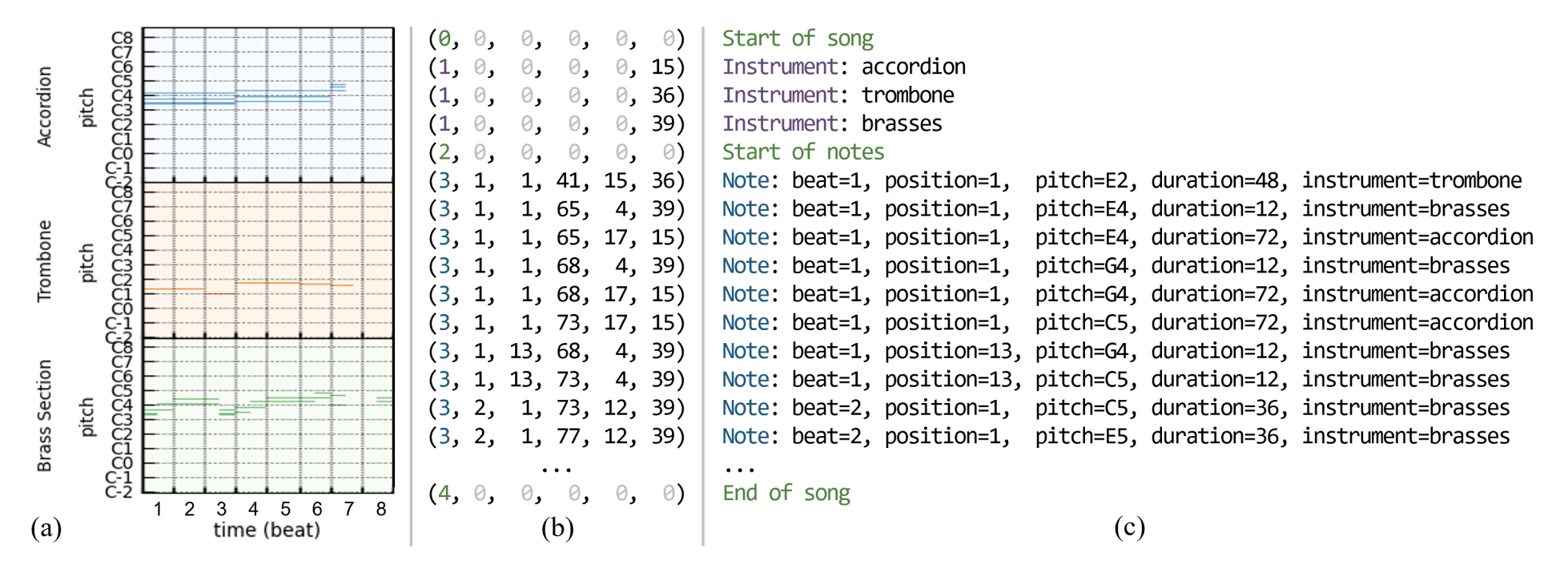

In this assignment, you will train a transformer model for multitrack music generation, that is, a model that can generate multi-instrument music. We will use the Lakh MIDI Dataset (LMD; Raffel, 2016), a collection of 176,581 unique MIDI files, 45,129 of which have been matched and aligned to entries in the Million Song Dataset. Specifically, we will use a cleaner subset (Dong et al., 2018) consisting of 21,425 files. We will base our model on the Multitrack Music Transformer framework (Dong et al., 2023). To simplify the task, we will group MIDI instruments into five tracks: piano, guitar, bass, strings, and brass.
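To make the five-track grouping concrete, here is a minimal sketch of how MIDI program numbers could be mapped to tracks. The ranges below follow the General MIDI instrument families (0-indexed program numbers) and are an assumption for illustration; the mapping used in the assignment notebook may differ. The names `TRACK_PROGRAM_RANGES` and `program_to_track` are hypothetical.

```python
# Hypothetical grouping of General MIDI programs (0-127) into five tracks.
# Ranges follow the General MIDI instrument families; the assignment's
# actual mapping may differ.
TRACK_PROGRAM_RANGES = {
    "piano": range(0, 8),      # GM family: Piano
    "guitar": range(24, 32),   # GM family: Guitar
    "bass": range(32, 40),     # GM family: Bass
    "strings": range(40, 48),  # GM family: Strings
    "brass": range(56, 64),    # GM family: Brass
}


def program_to_track(program, is_drum=False):
    """Return the track name for a MIDI program number, or None if unmapped."""
    if is_drum:
        # Drum tracks are not part of the five-track grouping in this sketch.
        return None
    for track, programs in TRACK_PROGRAM_RANGES.items():
        if program in programs:
            return track
    return None


print(program_to_track(0))   # acoustic grand piano -> "piano"
print(program_to_track(33))  # electric bass (finger) -> "bass"
print(program_to_track(73))  # flute -> None (not covered in this sketch)
```

In this sketch, instruments outside the five families are simply dropped; merging them into the nearest track would be another reasonable choice.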

Here’s the representation we are using in this assignment:

Pretrained Model Checkpoint

In case you lose your trained model, here is a checkpoint for you to play with. It was saved at epoch 35 of a 50-epoch training run, the epoch at which the validation loss was lowest.
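Selecting a checkpoint by lowest validation loss can be sketched as follows. This is a minimal illustration, not the code used to produce the checkpoint above; the function name `best_epoch` is hypothetical and the loss values are made up.

```python
def best_epoch(val_losses):
    """Return (1-indexed epoch, loss) for the lowest validation loss."""
    if not val_losses:
        raise ValueError("val_losses must not be empty")
    # Index of the smallest loss; ties resolve to the earliest epoch.
    idx = min(range(len(val_losses)), key=val_losses.__getitem__)
    return idx + 1, val_losses[idx]


# Made-up per-epoch validation losses, for illustration only.
losses = [2.1, 1.7, 1.5, 1.6, 1.8]
epoch, loss = best_epoch(losses)
print(epoch, loss)  # 3 1.5
```

In practice, you would save a checkpoint whenever the validation loss improves, so that the best one is still on disk after later epochs start to overfit.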

Links to the Jupyter Notebook

References

- Colin Raffel, “Learning-Based Methods for Comparing Sequences, with Applications to Audio-to-MIDI Alignment and Matching,” PhD Dissertation, Columbia University, 2016.

- Hao-Wen Dong, Wen-Yi Hsiao, Li-Chia Yang, and Yi-Hsuan Yang, “MuseGAN: Multi-track Sequential Generative Adversarial Networks for Symbolic Music Generation and Accompaniment,” AAAI, 2018.

- Hao-Wen Dong, Ke Chen, Shlomo Dubnov, Julian McAuley, and Taylor Berg-Kirkpatrick, “Multitrack Music Transformer,” ICASSP, 2023.